Is My SaaS Ready for AI? A Self-Assessment for B2B Founders #

You Asked ChatGPT This Question. Here's the Real Answer. #

If you're a SaaS CEO in 2026 and you haven't asked "is my product ready for AI?" then you're probably the only one. Gartner predicts 40% of enterprise apps will have task-specific AI agents by end of 2026, up from under 5% in 2025 [1]. That's at least an 8x jump in a single year. The pressure is real.

But here's what nobody tells you: the question itself is wrong. "AI-ready" isn't a binary state. It's not something you either have or don't. It's a spectrum, and where you sit on that spectrum determines whether your AI initiative ships in weeks or stalls for quarters.

I've watched dozens of B2B SaaS companies try to add AI. Some shipped in days. Others burned six months and abandoned the project. The difference wasn't budget, team size, or which model they picked. It was readiness, and most founders don't know how to measure it. (Try our customization readiness quiz for a quick diagnostic.)

Let's fix that.

Key Takeaways

- 63% of organizations lack, or are unsure whether they have, AI-ready data management practices (Gartner, 2025)

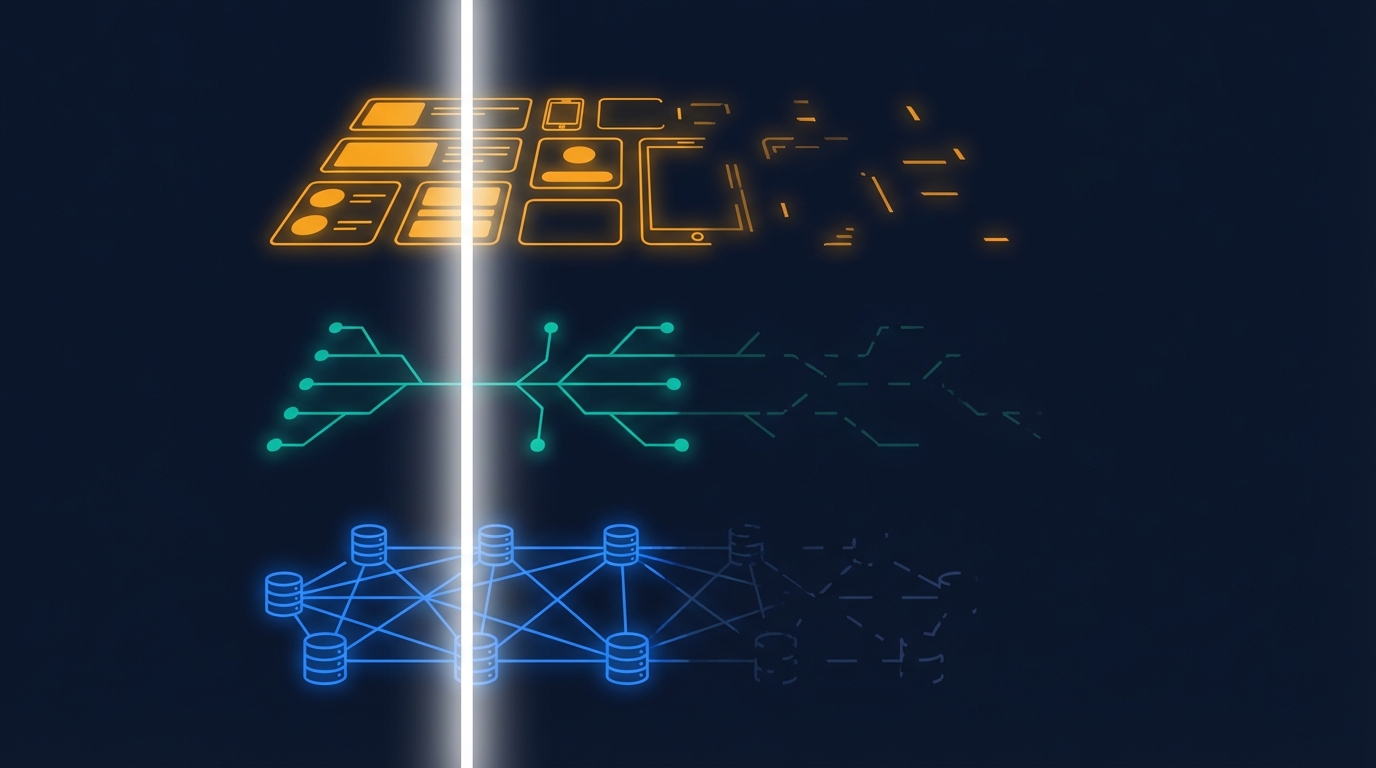

- AI readiness depends on three layers: your data, your APIs, and your UX patterns

- 95% of enterprise AI pilots deliver zero measurable return, mostly due to poor preparation (MIT Media Lab, 2025)

- The fastest path to AI isn't rebuilding your product. It's adding a customization layer on top.

What Does "AI-Ready" Actually Mean for a SaaS Product? #

63% of organizations either lack or are unsure if they have the right data management practices for AI, according to a Gartner survey of 1,203 data management leaders [2]. Through 2026, Gartner predicts organizations will abandon 60% of AI projects unsupported by AI-ready data. That stat should make every SaaS CEO pause before greenlighting an AI initiative.

"AI-ready" for a SaaS product means three things are true simultaneously. Your data layer is clean and accessible. Your API surface is well-documented and composable. And your UX patterns can accommodate AI-generated outputs without confusing users.

Miss any one of these three, and your AI project stalls.

The Data Layer: Can AI Actually Use Your Data? #

Most SaaS products store data. Fewer make that data usable by AI. The difference matters.

AI-ready data means your records are normalized, consistently formatted, and queryable without manual cleanup. It means your data model has clear relationships between entities. For example, a work order connects to an asset, which connects to a location, which connects to a team.

If your engineers need three days to pull a clean dataset for any new feature, your data isn't AI-ready. If your customer data lives in six different tables with inconsistent naming conventions, your data isn't AI-ready.

What does AI-ready look like in practice? A single API call that returns a customer's work orders, sorted by priority, with the related asset info attached. That's it. If you can do that today, your data layer is further along than most.
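Here's a minimal sketch of what that single call could return, using the work order, asset, location, and team chain from above. The class names, field names, and the `work_orders_for_customer` function are all hypothetical, the data is invented, and the rule that priority 1 means most urgent is an assumption. A real product would back this with a database query rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Team:
    id: str
    name: str


@dataclass(frozen=True)
class Location:
    id: str
    name: str
    team: Team


@dataclass(frozen=True)
class Asset:
    id: str
    name: str
    location: Location


@dataclass(frozen=True)
class WorkOrder:
    id: str
    customer_id: str
    title: str
    priority: int  # assumption: 1 is the most urgent
    asset: Asset


def work_orders_for_customer(orders: List[WorkOrder], customer_id: str) -> List[Dict]:
    """Return one customer's work orders, most urgent first, with asset info inlined."""
    mine = sorted(
        (o for o in orders if o.customer_id == customer_id),
        key=lambda o: o.priority,
    )
    return [
        {
            "id": o.id,
            "title": o.title,
            "priority": o.priority,
            "asset": {
                "id": o.asset.id,
                "name": o.asset.name,
                "location": o.asset.location.name,
                "team": o.asset.location.team.name,
            },
        }
        for o in mine
    ]


# Invented sample data, for illustration only.
team = Team("t1", "Facilities")
site = Location("l1", "Plant A", team)
pump = Asset("a1", "Pump 7", site)
orders = [
    WorkOrder("w1", "c1", "Replace seal", 2, pump),
    WorkOrder("w2", "c1", "Fix leak", 1, pump),
    WorkOrder("w3", "c2", "Inspect valve", 1, pump),
]
result = work_orders_for_customer(orders, "c1")
```

The point isn't the code itself. It's that every hop in the relationship chain resolves without a manual join or a cleanup script.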

The API Surface: Can External Systems Talk to Your Product? #

Your AI features will need to read data, write data, and trigger actions inside your product. That means your API surface needs to be more than a collection of CRUD endpoints bolted on as an afterthought.

AI-ready APIs have three properties. They're well-documented (not just for humans, but machine-readable with OpenAPI specs). They're composable (you can chain multiple endpoints to complete a workflow). And they're permissioned (every call respects the same auth and access rules as your main UI).

How complete is your API coverage right now? If your web app can do something that your API can't, that's a gap AI will hit immediately.
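One rough way to find those gaps is to compare an inventory of UI actions against the operations in your OpenAPI spec. The sketch below assumes you maintain a list of UI action names and that they match the spec's `operationId` values, which won't be true for every product. The action names and the sample spec are invented.

```python
from typing import Dict, List, Set, Tuple

# HTTP method keys that can appear under a path item in an OpenAPI document.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def api_operation_ids(openapi_spec: Dict) -> Set[str]:
    """Collect every operationId declared in the spec's paths section."""
    ops: Set[str] = set()
    for path_item in openapi_spec.get("paths", {}).values():
        for method, operation in path_item.items():
            if method in HTTP_METHODS and "operationId" in operation:
                ops.add(operation["operationId"])
    return ops


def coverage_report(ui_actions: Set[str], openapi_spec: Dict) -> Tuple[float, List[str]]:
    """Return (percent of UI actions with an API equivalent, missing action names)."""
    api_ops = api_operation_ids(openapi_spec)
    missing = sorted(ui_actions - api_ops)
    covered = len(ui_actions & api_ops)
    percent = 100.0 * covered / len(ui_actions) if ui_actions else 100.0
    return percent, missing


# Hypothetical inventory and spec fragment.
ui_actions = {"createWorkOrder", "closeWorkOrder", "assignTechnician", "exportReport"}
spec = {
    "openapi": "3.0.3",
    "paths": {
        "/work-orders": {
            "get": {"operationId": "listWorkOrders"},
            "post": {"operationId": "createWorkOrder"},
        },
        "/work-orders/{id}/close": {
            "post": {"operationId": "closeWorkOrder"},
        },
    },
}
percent, missing = coverage_report(ui_actions, spec)
```

In this invented example, half the UI actions have no API equivalent, and the `missing` list tells you exactly which ones to build first.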

The UX Layer: Can Your Interface Handle AI Output? #

This is the layer most founders forget entirely. Your users need to see, trust, and act on what AI produces. That requires UX patterns your product may not have yet.

Does your app support inline previews? Can it show a generated draft that the user approves before it takes effect? Does it handle partial or uncertain outputs gracefully, rather than forcing binary success/failure states?

If your UX only supports deterministic, form-based workflows, AI outputs will feel foreign and untrustworthy to your users. That kills adoption faster than any technical limitation.
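To make the preview-and-approve idea concrete, here's a small sketch of a draft that moves through review states instead of a binary success or failure. The `AIDraft` type, the status names, and the 0.7 review threshold are all assumptions made for illustration, not a prescribed design.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict


class DraftStatus(Enum):
    PENDING = "pending"
    NEEDS_REVIEW = "needs_review"  # uncertain output, flagged rather than failed
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class AIDraft:
    payload: Dict[str, str]
    confidence: float  # hypothetical 0.0-1.0 score supplied by your AI layer
    status: DraftStatus = DraftStatus.PENDING


def triage(draft: AIDraft, review_threshold: float = 0.7) -> AIDraft:
    """Flag low-confidence drafts for closer review instead of discarding them."""
    if draft.status is DraftStatus.PENDING and draft.confidence < review_threshold:
        draft.status = DraftStatus.NEEDS_REVIEW
    return draft


def approve(draft: AIDraft, apply: Callable[[Dict[str, str]], None]) -> AIDraft:
    """Nothing takes effect until the user explicitly approves the draft."""
    if draft.status not in (DraftStatus.PENDING, DraftStatus.NEEDS_REVIEW):
        raise ValueError(f"cannot approve a draft that is {draft.status.value}")
    apply(draft.payload)
    draft.status = DraftStatus.APPROVED
    return draft


def reject(draft: AIDraft) -> AIDraft:
    """Discard the draft without touching real data."""
    if draft.status not in (DraftStatus.PENDING, DraftStatus.NEEDS_REVIEW):
        raise ValueError(f"cannot reject a draft that is {draft.status.value}")
    draft.status = DraftStatus.REJECTED
    return draft


# Usage: a low-confidence draft is flagged, then applied only after approval.
applied = []
draft = triage(AIDraft({"title": "Replace seal"}, confidence=0.55))
flagged_status = draft.status
approve(draft, applied.append)
```

The design choice that matters is the `apply` callback: the AI proposes, but only the user's approval writes anything.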

How Do You Score Your Own AI Readiness? #

McKinsey's 2025 Global Survey on AI found that nearly all organizations are using AI in some form, but most are still in the experimentation or piloting phase [3]. The gap between "we're experimenting" and "we're shipping" is where readiness assessment lives.

Here's a simple framework. Score your product across five dimensions, 1-5 each. Be honest. This isn't a test you want to pass by inflating numbers.

1. Data Accessibility (1-5) #

Can you pull any customer's core data through a single, authenticated API call within 200ms? Score 1 if your data requires manual exports. Score 5 if your APIs return structured, relational data with sub-second latency.

2. API Coverage (1-5) #

What percentage of your product's actions are available through APIs? Score 1 if less than 20% of actions have API equivalents. Score 5 if over 90% of user-facing actions are API-accessible.

3. Documentation Quality (1-5) #

Do you have machine-readable API docs (OpenAPI/Swagger)? Score 1 for no documentation. Score 5 for auto-generated, versioned OpenAPI specs with examples and error codes.

4. Auth and Permissions (1-5) #

Does your API enforce the same access rules as your UI? Score 1 if APIs bypass permission checks. Score 5 if every API call respects row-level, role-based access control identical to the UI.
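One way to get to a 5 here is to route the UI and the API through the same permission check, so the two can't drift apart. This is a sketch under assumptions: the roles, the action names, and the rule that a record belongs to a team are all hypothetical stand-ins for whatever your product actually uses.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class User:
    id: str
    role: str
    team_ids: FrozenSet[str]


# Hypothetical role-to-action mapping.
ROLE_ACTIONS: Dict[str, FrozenSet[str]] = {
    "admin": frozenset({"read", "update", "delete"}),
    "technician": frozenset({"read", "update"}),
    "viewer": frozenset({"read"}),
}


def can(user: User, action: str, record_team_id: str) -> bool:
    """Role-based check plus a row-level check on the record's team."""
    role_ok = action in ROLE_ACTIONS.get(user.role, frozenset())
    row_ok = user.role == "admin" or record_team_id in user.team_ids
    return role_ok and row_ok


def update_work_order(user: User, record: Dict[str, str], changes: Dict[str, str]) -> Dict[str, str]:
    """The single code path that both entry points share."""
    if not can(user, "update", record["team_id"]):
        raise PermissionError(f"{user.id} may not update {record['id']}")
    record.update(changes)
    return record


def ui_handler(user: User, record: Dict[str, str], form: Dict[str, str]) -> Dict[str, str]:
    return update_work_order(user, record, form)


def api_handler(user: User, record: Dict[str, str], body: Dict[str, str]) -> Dict[str, str]:
    # An AI agent calling the API gets exactly the same answer a human gets in the UI.
    return update_work_order(user, record, body)
```

If your API handlers contain their own copy of the permission logic, or none at all, that's the gap to close before an AI agent starts calling them.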

5. UX Flexibility (1-5) #

Can your interface display AI-generated content alongside user-created content? Score 1 if everything is hardcoded forms. Score 5 if your UI supports dynamic layouts, previews, and user-approval workflows.

Your total: ___ out of 25

- 20-25: You're ready. Start shipping AI features this quarter.

- 15-19: Close. Fix the weakest dimension first, then go.

- 10-14: Foundation work needed. Budget 2-3 months of prep before AI development.

- Below 10: Major gaps. Consider a platform approach rather than building from scratch.

That last category is more common than you'd think. And it's not a death sentence. It just means building AI in-house isn't your fastest path.
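If you'd rather score this in a script than on paper, the rubric reduces to a few lines. The dimension keys and the `assess` function name are made up for this sketch, and the sample scores are invented. The bands are the ones listed above.

```python
from typing import Dict, Tuple

DIMENSIONS = (
    "data_accessibility",
    "api_coverage",
    "documentation_quality",
    "auth_and_permissions",
    "ux_flexibility",
)


def assess(scores: Dict[str, int]) -> Tuple[int, str, str]:
    """Return (total out of 25, readiness band, weakest dimension)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {', '.join(missing)}")
    for d in DIMENSIONS:
        if not 1 <= scores[d] <= 5:
            raise ValueError(f"{d} must be between 1 and 5")

    total = sum(scores[d] for d in DIMENSIONS)
    if total >= 20:
        band = "You're ready"
    elif total >= 15:
        band = "Close"
    elif total >= 10:
        band = "Foundation work needed"
    else:
        band = "Major gaps"

    # On a tie, the first dimension in DIMENSIONS order is reported.
    weakest = min(DIMENSIONS, key=lambda d: scores[d])
    return total, band, weakest


# Invented example scores.
total, band, weakest = assess({
    "data_accessibility": 4,
    "api_coverage": 3,
    "documentation_quality": 2,
    "auth_and_permissions": 4,
    "ux_flexibility": 3,
})
```

The `weakest` value is the useful output. It tells you which dimension to fix first.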

What Mistakes Do CEOs Make When Starting AI Initiatives? #

95% of enterprise AI initiatives deliver zero measurable return, according to a 2025 MIT Media Lab study that reviewed over 300 publicly disclosed initiatives [4]. That's not a typo. Ninety-five percent. The failure rate for AI pilots is higher than almost any other category of enterprise technology investment.

Why? Because CEOs keep making the same five mistakes.

Mistake 1: Starting with the Model, Not the Problem #

"We need to add GPT-4 to our product." No. You need to identify which customer workflow is broken and whether AI can fix it better than traditional software. The model is the last decision, not the first.

We've seen SaaS companies spend months evaluating LLMs before they've even decided what the AI should do. That's like choosing a database before you've designed your data model.

Mistake 2: Treating AI as a Feature Instead of an Architecture Decision #

Adding a chatbot to your existing UI is a feature. Building a system that generates per-customer workflow apps is an architecture. Most CEOs greenlight feature-level AI work and then wonder why it doesn't move retention metrics.

The features that actually reduce churn are the ones that personalize the product to each customer's specific workflow. That requires architectural thinking, not prompt engineering.

Mistake 3: Assigning AI to a Side Team #

"Let's spin up a small AI team and see what they come up with." This approach almost always fails. AI touches your data layer, your API surface, your security model, and your UX. A side team without authority over those systems will hit blockers constantly.

The most successful AI initiatives we've seen have executive sponsorship and cross-functional authority from day one.

Mistake 4: Ignoring Data Readiness Until It's Too Late #

Gartner's prediction bears repeating: through 2026, organizations will abandon 60% of AI projects that aren't supported by AI-ready data [2]. Most CEOs assume their data is fine because the product works. But "works for human users clicking through a UI" and "works for AI consuming via API" are two very different standards.

Mistake 5: Building Everything In-House #

Not every AI capability needs to be built from scratch. Your engineering team's time is finite. Every month spent building AI infrastructure is a month not spent on your core product roadmap. Sometimes the fastest path is embedding a platform that already solves the readiness problem.

What Are the Fastest Paths to Adding AI? #

Gartner predicts agentic AI could drive 30% of enterprise application software revenue by 2035, up from 2% in 2025 [1]. The market is moving fast. So what's the quickest way to ship?

There are three paths, and they're not mutually exclusive.

Path 1: Single-Shot AI Features (2-4 Weeks) #

If your readiness score is 15+, you can ship extraction-level AI features quickly. Photo-to-form, text summarization, smart categorization. These require a single API call to an LLM, a validation layer, and a UI component to display results.
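Here's roughly what the "single call plus validation layer" shape looks like for smart categorization. The LLM call is passed in as a plain function so the sketch doesn't depend on any vendor SDK. The category list, the prompt wording, and the fallback behavior are all assumptions for illustration.

```python
import json
from typing import Callable, Dict

# Hypothetical categories for a maintenance product.
ALLOWED_CATEGORIES = {"electrical", "plumbing", "hvac", "other"}


def categorize(text: str, call_llm: Callable[[str], str]) -> Dict[str, str]:
    """Ask the model for a category, then validate before anything reaches the UI."""
    prompt = (
        "Classify this maintenance request. Reply with JSON like "
        '{"category": "..."} using one of: '
        + ", ".join(sorted(ALLOWED_CATEGORIES))
        + "\n\nRequest: "
        + text
    )
    raw = call_llm(prompt)

    # Validation layer: never trust the raw model output.
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return {"category": "other", "source": "fallback"}

    category = data.get("category") if isinstance(data, dict) else None
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return {"category": "other", "source": "fallback"}
    return {"category": category, "source": "model"}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return '{"category": "plumbing"}'


suggestion = categorize("The sink in bay 3 is leaking", fake_llm)
```

The `source` field is there so the UI component can show users whether a value came from the model or from the fallback.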

These are table-stakes features. They won't differentiate your product, but they'll check the box that buyers increasingly expect. Think of them as the minimum viable AI.

Path 2: Conversational AI with Tool Use (2-3 Months) #

If your readiness score is 18+, you can build an AI assistant that reads and writes data through your APIs. This is the chat-based copilot pattern, where users ask questions in natural language and the AI takes actions inside your product.

This requires good API coverage, strong auth, and a streaming UI pattern. It's harder to build than Path 1 but dramatically more useful for power users.
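The core of the tool-use pattern is a dispatcher that sits between the model and your APIs. Below is a minimal sketch, assuming the model emits a tool call as a dict with `name` and `arguments` keys. That shape, the tool names, and the in-memory `WORK_ORDERS` store are all hypothetical, and a real build would call your actual endpoints and stream results back to the chat UI.

```python
from typing import Any, Callable, Dict, Set

TOOLS: Dict[str, Callable[..., Any]] = {}

# In-memory stand-in for your product's data.
WORK_ORDERS: Dict[str, Dict[str, str]] = {"w1": {"status": "open"}}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Register a function as something the assistant is allowed to request."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("get_work_order")
def get_work_order(order_id: str) -> Dict[str, str]:
    return dict(WORK_ORDERS[order_id])


@tool("close_work_order")
def close_work_order(order_id: str) -> Dict[str, str]:
    WORK_ORDERS[order_id]["status"] = "closed"
    return dict(WORK_ORDERS[order_id])


def run_tool_call(call: Dict[str, Any], allowed: Set[str]) -> Dict[str, Any]:
    """Execute a model-requested tool call only if the current user may use that tool."""
    name = call.get("name")
    if name not in TOOLS:
        return {"ok": False, "error": "unknown tool"}
    if name not in allowed:
        return {"ok": False, "error": "not permitted"}
    try:
        return {"ok": True, "result": TOOLS[name](**call.get("arguments", {}))}
    except (KeyError, TypeError) as exc:
        # Bad or missing arguments go back to the model as data, not as a crash.
        return {"ok": False, "error": f"bad arguments: {exc}"}


request = {"name": "close_work_order", "arguments": {"order_id": "w1"}}
denied_result = run_tool_call(request, allowed={"get_work_order"})
ok_result = run_tool_call(request, allowed={"get_work_order", "close_work_order"})
```

The `allowed` set is where your existing auth model plugs in: the assistant can never do more than the user it's acting for.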

Path 3: Embed an AI App Platform (2-4 Weeks) #

Here's the path most founders don't consider. Instead of building AI features one by one, you embed a platform that lets your customers build their own AI-powered apps on top of your product.

This is what Gigacatalyst does. It's a white-label AI app builder that sits on top of your existing APIs and data model. Your customers describe the workflow they need in plain English, and Catalyst generates a working app, connected to real data, respecting your existing security model, deployed the same day.

The readiness bar for embedding a platform is actually lower than for building AI features yourself. You need decent APIs and a clear data model. Catalyst handles the AI generation, the UX, the marketplace, and the governance layer. You don't need to re-architect your product.

A YC-backed CMMS platform embedded Catalyst and saw 90.8% adoption across 946 users, with 89% day-30 retention [5]. The reason adoption was so high: every customer got apps tailored to their specific workflow, not a generic AI feature that worked the same for everyone.

That's the core insight. AI readiness isn't just about whether your product can run an AI model. It's about whether your product can deliver AI value that's specific to each customer. A platform approach gets you there faster than building from scratch.

How Long Does AI Readiness Actually Take? #

BCG reports that 74% of companies struggle to scale AI value due to data governance issues [6]. If your readiness score was below 15, you're probably looking at real prep work. Here's a realistic timeline.

Data Layer Cleanup: 4-8 Weeks #

Normalize your data model. Build consistent entity relationships. Ensure your main tables are queryable with sub-second latency. This is the foundation everything else sits on.

API Surface Expansion: 4-12 Weeks #

Document existing endpoints with OpenAPI specs. Build API equivalents for any action that's currently UI-only. Add proper pagination, filtering, and error handling.

Auth and Security Hardening: 2-4 Weeks #

Ensure every API endpoint enforces the same permissions as your UI. Add row-level access control if you don't have it. Build audit logging for AI-initiated actions.

UX Pattern Development: 2-6 Weeks #

Build preview/approve workflows. Add streaming response display. Create UI containers that can render dynamic, AI-generated content alongside your existing interface.

Total: 3-6 months if you're building from zero and some of the phases overlap (run strictly back to back, the four ranges add up to 12-30 weeks). Less if you only need to close specific gaps.

Or, if you take the platform path, you can skip most of this. Embedding a solution like Gigacatalyst typically takes about two weeks, because the platform adapts to your existing APIs rather than requiring you to build new ones [5].

Should You Build AI Features or Embed a Platform? #

The build-vs-embed decision comes down to three questions. How complete are your APIs today? How many engineering months can you spare? And how quickly do your customers need AI capabilities?

If you have strong APIs, a dedicated AI team, and a 6+ month timeline, building custom AI features makes sense. You'll have full control and deep integration.

If your APIs are decent but your engineering bandwidth is limited, or you need to ship in weeks instead of quarters, the platform approach wins. You trade some control for dramatic speed.

Most SaaS companies in 2026 are choosing a hybrid: embed a platform for broad AI customization, then build targeted AI features for their core differentiators. That way, customers get personalized AI apps immediately while your engineering team focuses on the AI capabilities only you can build.

See Gigacatalyst in Action

We build the AI platform layer that sits on top of B2B SaaS products and lets customers create their own workflow apps. Connected to real data. Governed by your security model.

The Bottom Line #

Your SaaS product is probably closer to AI-ready than you think. The question isn't whether you have perfect data or flawless APIs. It's whether you're willing to honestly assess where you are, fix the biggest gaps, and choose the path that matches your timeline and resources.

Score yourself. Fix the weakest link. Ship something real.

The SaaS companies that win in 2026 won't be the ones with the fanciest models. They'll be the ones whose customers actually use AI features every day, because those features match how each customer works.

That's readiness. Not a technology checklist, but a customer outcome.

Footnotes #

[1] Gartner. "Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Up from Less Than 5% in 2025." 2025. https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025

[2] Gartner. "Lack of AI-Ready Data Puts AI Projects at Risk." 2025. https://www.gartner.com/en/newsroom/press-releases/2025-02-26-lack-of-ai-ready-data-puts-ai-projects-at-risk

[3] McKinsey & Company. "The State of AI in 2025: Agents, Innovation, and Transformation." 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

[4] Forbes. "Why 95% Of AI Pilots Fail, And What Business Leaders Should Do Instead." 2025. https://www.forbes.com/sites/andreahill/2025/08/21/why-95-of-ai-pilots-fail-and-what-business-leaders-should-do-instead/

[5] Gigacatalyst internal data. Production deployment metrics, 2025-2026.

[6] BCG. Enterprise AI adoption and data governance challenges. 2025.