The easiest API to work with is one that was never meant to exist.

I was integrating with a platform last month. Millions of users, real product, years of traction. I needed basic profile data: name, bio, avatar, social links. The kind of thing any API returns in 200ms.

There was no API. Not a bad one. Not a rate-limited one. No API at all. Server-rendered PHP, every page returns HTML, and Cloudflare blocks curl before it reaches the server. I probed 14 plausible endpoints. All 404:

const candidates = [

'/api/profile', '/api/user/me', '/api/user',

'/api/blocks', '/api/social', '/api/links',

'/api/v1/profile', '/api/v1/user/me',

];

Promise.all(candidates.map(async url => {

const r = await fetch(url, { credentials: 'include' });

const text = await r.text();

return { url, status: r.status, json: text.startsWith('{') };

})).then(results => console.table(results));

// Every row: status 404, json falseMost developers would stop here. File a partnership request, wait weeks, maybe get a CSV export.

I think that's the wrong instinct.

The page is the API response #

PHP powers 76.2% of all websites with a known server-side language1. That's three-quarters of the web running on a technology that predates REST APIs by a decade. These apps have to get data to the browser somehow. They just do it differently.

A modern React app fetches /api/user after mount. A PHP app from 2014 prints the data directly into the HTML template before the page loads. Both approaches deliver the same information to the same place. The PHP approach is, counterintuitively, easier to extract from. Everything arrives in one request. No async loading, no skeleton screens, no waiting for JavaScript to run.

I started calling this technique DOM archaeology: treating rendered HTML as a structured data source. It's not scraping. Scraping implies fragility, regex, broken parsers. This is closer to reading a response payload that happens to be formatted as HTML instead of JSON.

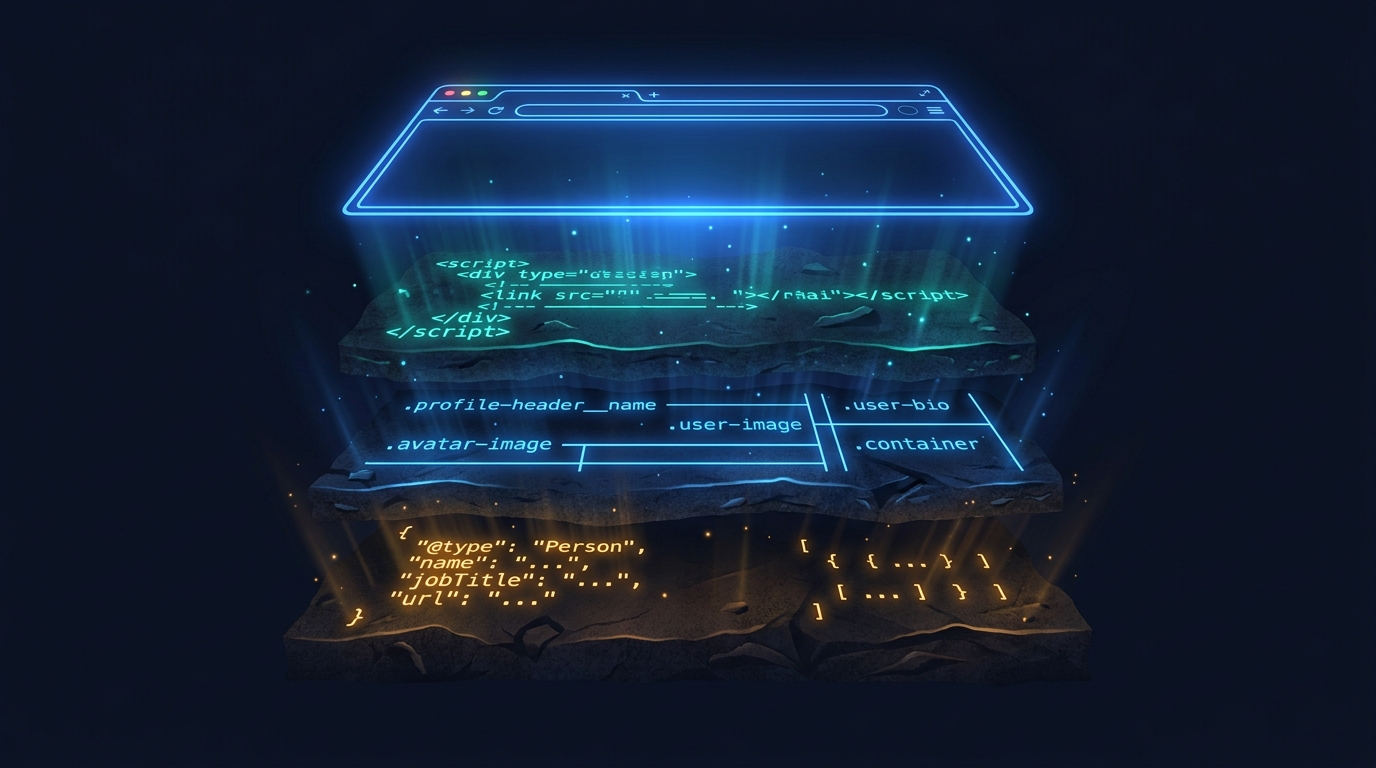

Here are the three layers of data buried in every server-rendered page, ranked by reliability.

Layer 1: Inline script tags #

This is the one most people miss.

PHP apps routinely serialize server-side data as JSON inside <script> tags. The frontend JavaScript needs configuration at boot time: user IDs, session tokens, feature flags, permissions. The server bakes all of it into the page so the JS doesn't need a separate fetch.

const scripts = [...document.querySelectorAll('script:not([src])')];

const config = scripts

.map(s => { try { return JSON.parse(s.innerText); } catch { return null; } })

.filter(Boolean);

console.log(config);On the platform I was working with, one of those script tags contained:

{

"profileId": 1632590,

"csrf": "b194d1d1e0f0664b...",

"creditBalance": 0,

"featureFlags": { "aiEnabled": false },

"sessionConfig": { "timeout": 3600 }

}The CSRF token needed for authenticated writes. The numeric profile ID. Feature flags showing what's enabled. All sitting in a script tag because the frontend needed it.

If you need a specific key, target it:

const configScript = [...document.querySelectorAll('script:not([src])')]

.find(s => s.innerText.includes('profileId'));

const data = JSON.parse(configScript?.innerText || 'null');I suspect most PHP apps have between two and ten inline JSON blobs on any given page. Wordpress embeds wpApiSettings. Shopify embeds the entire product catalog on collection pages. Laravel Blade templates routinely inject @json() blocks. The pattern is everywhere because the need is universal: the frontend needs server data at boot time.

Layer 2: CSS class names as a data schema #

BEM naming conventions turn CSS classes into documentation. A class called profile-header__display-name is telling you, in plain English, that this element contains the display name inside a profile header component.

Dump every class name in the document:

const classes = [...new Set(

[...document.querySelectorAll('[class]')]

.flatMap(el => [...el.classList])

.filter(c => c.length > 2)

)].sort();

console.log(classes.join('\n'));On a typical profile page this produces something like:

profile-header__avatar

profile-header__bio

profile-header__display-name

link-item

link-item__description

link-item__icon

link-item__title

social-icon

social-icon__platform

social-icon__url

Read that list and you've reverse-engineered the data model without looking at a single line of server code. There's a profile-header with avatar, bio, display name. There are link-item entries with titles and descriptions. There are social-icon entries with platforms and URLs.

Build your selectors from the class names:

const profile = {

name: doc.querySelector('.profile-header__display-name')?.innerText?.trim(),

bio: doc.querySelector('.profile-header__bio')?.innerText?.trim(),

avatar: doc.querySelector('.profile-header__avatar img')?.src,

links: [...doc.querySelectorAll('.link-item')].map(el => ({

title: el.querySelector('.link-item__title')?.innerText?.trim(),

url: el.querySelector('a')?.href,

})),

socials: [...doc.querySelectorAll('.social-icon')].map(el => ({

platform: el.dataset.platform || el.dataset.iconName,

url: el.href,

})),

};One caveat. Class names change on redesigns. They're reliable for the initial integration, and they're how you discover the data model. But you want a fallback for anything critical. Which leads to the most stable source.

Layer 3: Schema.org JSON-LD #

Almost every server-rendered app includes Schema.org structured data in the page head. It's there for SEO: Google uses it for rich results, knowledge panels, search features. The team maintains it because breaking it hurts their search rankings.

That makes it the most stable data source on the page.

const schema = JSON.parse(

document.querySelector('script[type="application/ld+json"]')?.innerText || 'null'

);A profile page typically yields:

{

"@type": "ProfilePage",

"mainEntity": {

"@type": "Person",

"name": "Jane Smith",

"url": "https://example.com/janesmith"

},

"dateCreated": "2024-01-15T10:22:00+00:00",

"dateModified": "2026-04-23T19:16:48+00:00"

}The canonical name, profile URL, creation date, last modified timestamp. For a product page you'd get price and availability. For a restaurant, hours and address. The platform put this data here deliberately, formatted it according to a public spec, and actively maintains it.

When the CSS class for "display name" changes in a redesign, the Schema.org mainEntity.name almost certainly won't. Use it as your foundation, not an afterthought.

The complete extraction function #

Chain all three layers with fallbacks:

async function extractProfileData(profileUrl) {

const html = await fetch(profileUrl, { credentials: 'include' }).then(r => r.text());

const doc = new DOMParser().parseFromString(html, 'text/html');

// Layer 3: Schema.org (most stable)

const schema = JSON.parse(

doc.querySelector('script[type="application/ld+json"]')?.innerText || 'null'

);

// Layer 1: Inline script config (richest data)

const config = JSON.parse(

[...doc.querySelectorAll('script:not([src])')]

.find(s => s.innerText.includes('userId') || s.innerText.includes('profileId'))

?.innerText || 'null'

);

// Layer 2: CSS selectors (most fields) + data-* attributes

return {

name: doc.querySelector('[class*="display-name"]')?.innerText?.trim()

|| schema?.mainEntity?.name,

bio: doc.querySelector('[class*="__bio"]')?.innerText?.trim() || '',

avatar: doc.querySelector('img[src*="/avatars/"]')?.src,

socials: [...doc.querySelectorAll('a[data-platform], a[data-icon-name]')]

.map(el => ({ platform: el.dataset.platform || el.dataset.iconName, url: el.href }))

.filter(s => s.url && s.platform),

userId: config?.profileId?.toString()

|| doc.querySelector('[data-user-id]')?.dataset?.userId,

csrf: config?.csrf,

createdAt: schema?.dateCreated,

updatedAt: schema?.dateModified,

};

}The stability hierarchy: Schema.org for fields that matter most, inline JSON for configuration and auth tokens, CSS selectors for everything else, data-* attributes as a bonus layer for IDs and state.

Why legacy apps are actually easier #

Here's the part that surprised me. Modern SPAs are harder to extract data from. A React app renders a loading spinner, then fetches data from an API, then updates the DOM. You'd need to wait for JavaScript execution, intercept XHR calls, or run a headless browser.

A PHP app from 2014? Everything is in the initial HTML. One request, one parse, all the data. No JavaScript execution required. DOMParser handles the whole thing in under 100ms.

The older the app, the more data it puts in the page. The more data it puts in the page, the more you can extract. Server-side rendering, which the industry spent years moving away from, turns out to be the most integration-friendly architecture ever built.

76% of the web works this way. The techniques apply to Wordpress, Shopify, Laravel, Drupal, every custom PHP codebase. The specific selectors change but the three layers are always there: inline scripts, semantic class names, schema.org markup.

The data was always in the browser. We just forgot to look.

Building on top of messy systems?

We build AI-powered tools that work with your existing data layer, whatever shape it's in.

Footnotes #

-

W3Techs. "Usage Statistics of Server-side Programming Languages for Websites." https://w3techs.com/technologies/overview/programming_language. April 2026. ↩