Project management software added more AI features in 2024-2025 than in the prior decade combined. Every major platform (Jira, Asana, Monday.com, Linear, ClickUp, Notion, and Basecamp) shipped AI writing assistants, task generators, status summarizers, and natural language query tools. The marketing all sounds the same. The results in production are not the same.

Some of these features are genuinely changing how engineering, operations, and marketing teams run projects. Most are being tried once and then ignored. The difference isn't the quality of the AI. It's whether the feature solves a problem teams actually have in the middle of their real workflow, or a problem that product managers imagined from behind a desk.

This is a review of the AI feature categories across project management software in 2026: which ones have real adoption, for which team types, and what to evaluate before buying or upgrading.

Key Takeaways

- The global project management software market reached $7.8 billion in 2025 (Gartner, 2024) [1]

- AI task generation and sprint planning assistance have the highest real-world adoption among engineering teams

- AI status updates and meeting summary features are widely used but show limited impact on project outcomes

- Natural language project queries have strong demo appeal but low daily usage in most team types

- Teams with the highest AI feature adoption are those that built custom workflow views matching their specific process

The Project Management Software Landscape in 2026

The global project management software market reached $7.8 billion in 2025, with AI-enabled features cited as the primary driver of platform upgrades [1]. The market segments into several distinct tiers.

Engineering-focused platforms: Jira (Atlassian), Linear, and GitHub Projects dominate software development teams. Their AI features are oriented around sprint management, bug triage, and code-to-task linking.

Cross-functional platforms: Asana, Monday.com, ClickUp, and Notion serve marketing, operations, design, and product teams. Their AI features span a wider range of use cases but sometimes go less deep on any single one.

Lightweight platforms: Basecamp, Trello, and Todoist prioritize simplicity. Their AI additions are more conservative, reflecting their audience's preference for low complexity.

The key insight for evaluating AI features: the same feature has very different adoption rates across these tiers. An AI sprint planning tool built for engineering teams delivers different value to a marketing campaign manager. Understanding which features were designed for which team type prevents expensive disappointment.

AI Task and Story Generation: Strong for Engineering, Variable for Others

AI-powered task and user story generation is the most consistently adopted AI feature in project management, specifically for engineering teams. Jira's AI features for story generation take a brief description or design spec and break it down into component tasks with acceptance criteria. Linear's AI does similar work with issues and project briefs.

For senior engineers and product managers, the value is in reducing the time spent on administrative task decomposition. Writing 15 well-structured user stories from a product spec used to take 45-90 minutes. AI reduces that to 10-15 minutes of review and refinement, a saving of roughly 30-80 minutes per spec on non-creative work.

The adoption pattern narrows significantly outside engineering. Marketing teams, operations teams, and agencies find AI task generation less useful because their project structures are more variable and context-dependent. A marketing campaign's task list depends on channel strategy, agency relationships, and brand guidelines that AI can't infer from a brief.

What's working: Engineering teams running agile sprints, product teams decomposing feature specs, QA teams generating test case outlines from acceptance criteria.

What's not: Creative and marketing teams whose project structures are too contextual for generic task generation. Operations teams whose workflows are more sequential than agile.

AI Status Summaries and Meeting Notes: Widely Used, Modest Impact

Almost every major project management platform now offers AI-generated status summaries: scan the project board and produce a "here's where things stand" paragraph. Asana's AI status updates, Monday.com's AI summaries, and Notion AI's project digests all do this.

These features see high initial adoption and moderate ongoing use. The problem they solve is real: writing a project status update is tedious, and seeing an AI-generated update that accurately describes your project for the first time is genuinely delightful.

The long-term adoption issue is quality consistency. AI summaries are accurate when projects are well-maintained in the tool. When they aren't (and most projects in most organizations aren't perfectly maintained), AI summaries surface stale information confidently, which creates more confusion than a human writing "I need to check on this" would.
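One way to reason about this risk is to measure how much of a board is stale before trusting a summary of it. The sketch below is illustrative only: the `Task` record, its `last_updated` field, and the 14-day threshold are assumptions made for the example, not any platform's actual data model or API.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Task:
    title: str
    status: str
    last_updated: date  # hypothetical field; real tools expose this differently


def stale_fraction(tasks: list[Task], today: date, max_age_days: int = 14) -> float:
    """Return the share of open tasks not updated within max_age_days."""
    open_tasks = [t for t in tasks if t.status != "done"]
    if not open_tasks:
        return 0.0
    cutoff = today - timedelta(days=max_age_days)
    stale = [t for t in open_tasks if t.last_updated < cutoff]
    return len(stale) / len(open_tasks)


# Made-up sample board for illustration.
tasks = [
    Task("Draft launch plan", "in_progress", date(2026, 1, 5)),
    Task("Review budget", "in_progress", date(2026, 2, 20)),
    Task("Kickoff meeting", "done", date(2025, 12, 1)),
]
fraction = stale_fraction(tasks, today=date(2026, 3, 1))
```

A team could treat a high stale fraction as a signal to refresh the board before circulating an AI-generated summary.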

Meeting notes integration, where tools like Notion, ClickUp, and Asana pull from Zoom or Google Meet recordings to create task lists, has better outcomes when the meeting structure is consistent.

What's working: Engineering standups where task status is reliably updated in the tool. Weekly leadership reviews where the project board is the source of truth. Teams using meeting notes AI for recurring formats (sprint retros, weekly syncs).

What's not: Creative reviews and stakeholder meetings with variable structure. Projects where status lives partially in email and Slack rather than in the project tool.

AI Dependency and Risk Analysis: High Value, Low Adoption

Dependency analysis and risk flagging, where AI identifies tasks on the critical path, flags schedule risks, and surfaces inter-project conflicts, is the AI feature with the highest potential impact and the lowest current adoption.

The capability exists in enterprise tiers of Jira (Advanced Roadmaps with AI), Microsoft Project Copilot, and several Asana Business features. When it works, it catches the kind of problem that typically surfaces in a status meeting three weeks before a deadline: "we can't start Task B until Task A finishes, and Task A is delayed, which means..."

The adoption barrier is data quality again. Dependency analysis is only as good as the task relationships entered in the system. Most project teams enter task relationships inconsistently, making AI risk predictions unreliable. Teams that invest in maintaining clean dependency data see real value from this feature.
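The underlying mechanic can be sketched in a few lines. This is a simplified illustration of propagating a delay through recorded dependencies, not how any vendor's risk analysis is implemented; the task names, durations, and the 4-day slip are made up. It also shows why missing dependency links matter: a relationship that is not in `depends_on` cannot propagate a delay.

```python
from graphlib import TopologicalSorter

# Hypothetical tasks: duration in days, and the tasks each one depends on.
durations = {"A": 5, "B": 3, "C": 2, "D": 4}
depends_on = {"A": set(), "B": {"A"}, "C": {"B"}, "D": set()}


def earliest_finish(durations, depends_on, delays=None):
    """Compute each task's earliest finish day, given optional per-task delays."""
    delays = delays or {}
    finish = {}
    # static_order() yields every task after all of its prerequisites.
    for task in TopologicalSorter(depends_on).static_order():
        start = max((finish[d] for d in depends_on[task]), default=0)
        finish[task] = start + durations[task] + delays.get(task, 0)
    return finish


baseline = earliest_finish(durations, depends_on)
slipped = earliest_finish(durations, depends_on, delays={"A": 4})

# Tasks whose finish date moved because Task A slipped.
affected = sorted(t for t in durations if slipped[t] != baseline[t])
```

In this example the slip in Task A pushes B and C back by the same amount, while the independent Task D is unaffected.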

What's working: Engineering teams with mature Jira configurations, portfolio managers at companies where project interdependency is a recurring headache, PMOs in construction or professional services where task sequencing is critical.

What's not: Smaller teams where project complexity doesn't justify the data maintenance overhead. Creative projects where dependencies are informal and constantly shifting.

Natural Language Project Queries: Strong Demos, Low Daily Use

"Ask your project manager anything" features, where you can type "what's blocking the Q2 launch?" or "which tasks are more than a week overdue?" in natural language, appear in Asana AI, Monday.com AI, and Notion AI.

These features generate strong demo reactions. The idea that you can ask a plain language question and get a project answer is compelling. In practice, daily use is low across most team types for a consistent reason: the users who need to ask these questions are the same users who already know where to look in the project tool. Power users query their boards. New users don't know enough context to ask good questions.
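To see why power users gain little, consider what a question like "which tasks are more than a week overdue?" reduces to: a simple filter they already know how to build. The sketch below uses a made-up task list and field names (`due`, `done`) purely for illustration.

```python
from datetime import date, timedelta

# Hypothetical task records; field names are assumptions for this example.
tasks = [
    {"title": "Finalize pricing page", "due": date(2026, 3, 1), "done": False},
    {"title": "QA sign-off", "due": date(2026, 3, 12), "done": False},
    {"title": "Legal review", "due": date(2026, 2, 20), "done": True},
]


def overdue_by_more_than(tasks, today, days=7):
    """Return titles of unfinished tasks whose due date is more than `days` ago."""
    cutoff = today - timedelta(days=days)
    return [t["title"] for t in tasks if not t["done"] and t["due"] < cutoff]


overdue = overdue_by_more_than(tasks, today=date(2026, 3, 15))
```

Someone who works in the tool daily usually has this saved as a view, so the natural language layer mainly helps people who do not.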

The exception is executive reporting. Leadership teams who need periodic project snapshots but don't live in the tool daily use natural language queries more consistently. The feature reduces the friction of "let me pull up the board and find the right filter" for occasional users.

What's working: Leadership reviews where executives need project status without navigating the full tool. Onboarding periods where new team members can ask questions to orient themselves.

What's not: Daily project management for power users who already know their workflow. Teams where the bottleneck is project execution, not project visibility.

AI-Powered Workflow Automation: The Underrated Feature

While most AI feature attention goes to task generation and natural language queries, AI-powered workflow automation is quietly producing the most consistent ROI in project management.

ClickUp's AI automations, Monday.com's AI workflow builder, and Jira's Automation with AI condition evaluation let teams describe triggers and actions in plain language: "when a task moves to review, assign it to the person who last commented and notify the project lead." Setting up these automations used to require navigating automation rule builders with specific syntax. Natural language input reduces setup time by 70-80% for common automation patterns.
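Conceptually, the plain-language rule quoted above maps to a trigger, a condition, and two actions. The sketch below is a hypothetical stand-in written for illustration; the `Task` class and `on_status_change` function are assumptions and do not represent the rule format of ClickUp, Monday.com, or Jira.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    title: str
    status: str
    commenters: list[str] = field(default_factory=list)  # oldest first
    assignee: Optional[str] = None


def on_status_change(task: Task, new_status: str, project_lead: str) -> list[str]:
    """Apply the example rule and return the notifications to send."""
    task.status = new_status
    notifications = []
    if new_status == "review":  # trigger: task moves to review
        if task.commenters:
            # action 1: assign to the person who last commented
            task.assignee = task.commenters[-1]
        # action 2: notify the project lead
        notifications.append(f"{project_lead}: '{task.title}' is ready for review")
    return notifications


task = Task("Update onboarding flow", "in_progress", commenters=["dana", "lee"])
sent = on_status_change(task, "review", project_lead="sam")
```

The natural language builders generate something equivalent to this structure, which is why they save the most time on common, well-understood patterns.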

The value compounds. Every hour saved on manual task routing, status updates, and handoff notifications is an hour back for actual project work. Unlike AI summarization (which depends on data quality) or natural language queries (which require specific use cases), automation value scales with project volume.

What's working: Engineering teams automating sprint transitions and bug routing. Marketing teams automating content approval workflows. Operations teams automating recurring task creation and status notifications.

What's not: Highly variable projects where automation rules change every sprint. Small teams where manual coordination is faster than automation setup.

What Teams With the Best Adoption Have in Common

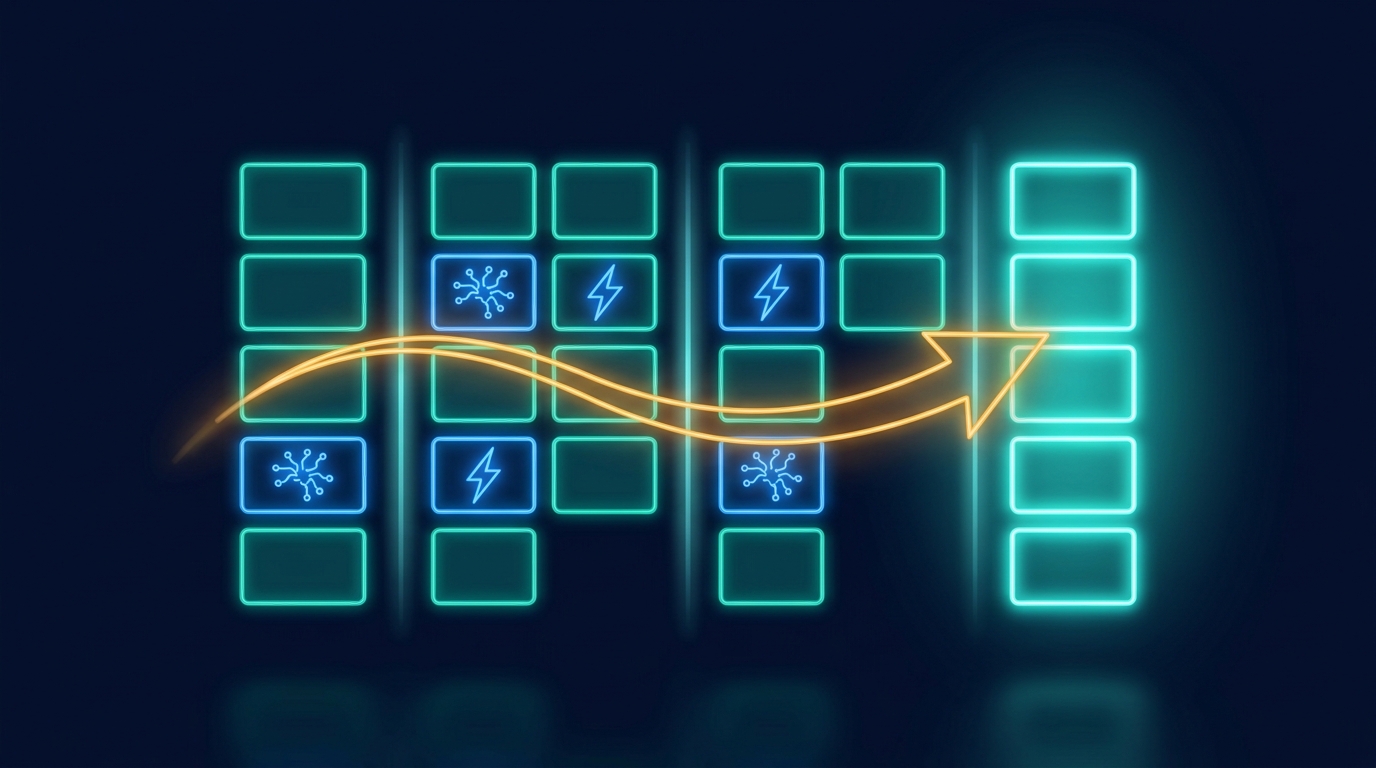

The project management teams with the highest AI feature adoption in 2026 share a pattern that vendor marketing rarely highlights: they didn't just turn on AI features. They built custom workflow views inside their project tool that match how their specific team actually works.

An engineering team that configures their sprint board to show exactly the fields, filters, and metrics relevant to their process uses AI features more, because those features have better context to work with. An operations team that builds a custom project template matching their actual workflow gets better AI task generation because the structure is specific, not generic.

The AI feature adoption gap between teams often isn't about the features. It's about the workflow fit underneath them. Generic project views produce generic AI outputs. Workflow-specific views produce specific, useful outputs.

This is the same pattern that appears across B2B SaaS: per-customer workflow fit drives adoption, and AI is only as useful as the workflow context it operates within.

Build Workflow-Specific Apps for Your Project Teams

Gigacatalyst lets your customers build custom workflow apps on top of your project management platform. Per-team views, role-specific dashboards, and workflow automations matched to how each team actually runs projects.

Frequently Asked Questions

Which project management tool has the best AI features in 2026?

For engineering teams: Linear has the best AI for issue management and sprint planning, while Jira leads for enterprise-scale dependency and portfolio management. For cross-functional teams: Asana and Monday.com have the most polished AI features for non-engineering use cases. For knowledge-work teams: Notion AI has the best integration between project management and documentation. The right choice depends on team type and which AI category addresses your primary bottleneck.

Do AI features in project management tools improve delivery times?

The data is mixed. AI task generation and automation consistently save hours per sprint on administrative work. AI status summaries and natural language queries show minimal impact on delivery metrics. McKinsey's research on AI productivity tools found that automation-type features (which reduce manual coordination) produce more consistent ROI than generative-type features (which assist with creation) [2]. The implication: prioritize AI automation features over AI writing features for project management ROI.

Is it worth upgrading to a higher tier for AI project management features?

Evaluate by feature category, not by tier. AI task generation and automation features often justify tier upgrades for teams running 5+ active projects simultaneously. AI status summaries and natural language queries rarely justify tier upgrades on their own unless leadership reporting is a significant time cost. Calculate the upgrade cost against the specific feature you'd use daily, not against the full AI feature set in the tier.
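That calculation can be sketched as a simple break-even check. All figures below (hours saved, hourly cost, seat count, and price difference) are placeholder assumptions for illustration, not vendor pricing or measured results; substitute your own numbers.

```python
def break_even_hours(price_delta_per_user: float, hourly_cost: float) -> float:
    """Hours each user must save per month for the upgrade to pay for itself."""
    return price_delta_per_user / hourly_cost


def monthly_net_value(hours_saved_per_user: float, hourly_cost: float,
                      users: int, price_delta_per_user: float) -> float:
    """Estimated monthly savings minus the added subscription cost."""
    savings = hours_saved_per_user * hourly_cost * users
    added_cost = price_delta_per_user * users
    return savings - added_cost


# Placeholder inputs: 10 users, $15 more per seat, $60 loaded hourly cost,
# and 2 hours saved per user per month by the one feature used daily.
needed = break_even_hours(price_delta_per_user=15.0, hourly_cost=60.0)
net = monthly_net_value(hours_saved_per_user=2.0, hourly_cost=60.0,
                        users=10, price_delta_per_user=15.0)
```

If the feature you would actually use daily cannot plausibly clear the break-even hours, the rest of the tier's AI feature set is unlikely to change the answer.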